DataOps and DevOps are both about improving workflows. DevOps helps developers release software faster and with fewer errors. DataOps focuses on the people who work with data, making it easier to manage data and share it between teams. In short, DevOps is for code and DataOps is for data. Both use automation and teamwork to speed things up, but they serve different purposes: DevOps builds and runs applications, while DataOps makes sure the right data is ready when it is needed.

DataOps vs DevOps: Streamlining Data and Development

Although both DataOps and DevOps aim to make processes more efficient, they target different areas of management. Here is a quick comparison of their roles.

| Feature | DataOps | DevOps |
|---|---|---|
| Definition | A set of practices that aims to improve data analysis and management | A set of practices that combines software development and IT operations to deliver software faster and more reliably |
| Automation | ✓ | ✓ |
| Collaboration | ✓ | ✓ |
| Agile Methodology | ✓ | ✓ |
| Focus | Data analysis and management | Software development and IT operations |
| Tools and Technologies | Tools for data integration, data management, and data governance | Tools for continuous integration and continuous delivery/deployment (CI/CD) |
| Data Complexity | Deals with complex, heterogeneous data; focuses on time-to-insight | Focuses on time-to-market; typically handles less complex, less heterogeneous data |

Detailed Analysis of the Differences Between DataOps and DevOps

DataOps and DevOps might sound similar, but they address different problems: one in data management, the other in software development. Here is a detailed breakdown of what makes them different and how each one affects business operations.

What Is DataOps?

DataOps is a set of practices for working with data. Data analysts and engineers gather data and use it to build analytical models and intelligent systems, which helps them reach actionable insights.

DataOps Principles

DataOps takes a holistic approach to how a company handles the data it manages. It draws on four fundamental ideas: lean, product thinking, agile, and DevOps. The DataOps Manifesto sets out the foundational principles of the approach. We've outlined five essential principles of DataOps below.

Automation

One of the major principles of DataOps is automation. Data pipelines, quality checks, and monitoring are automated, often using ready-made templates. This speeds up processes and makes data management easier and quicker.
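As an illustration of automated quality checks, here is a minimal Python sketch that applies a reusable list of rules (a simple "template") to every row in a batch. The column names (`order_id`, `amount`, `currency`), the rules, and the `run_quality_checks` helper are all hypothetical; real DataOps platforms provide their own ways of defining checks.

```python
from typing import Callable

# Hypothetical row type: a mapping of column name -> value.
Row = dict[str, object]
# A rule is a (description, predicate) pair; the predicate returns True when the row passes.
Rule = tuple[str, Callable[[Row], bool]]

# A reusable "template" of checks that can be applied to every incoming batch.
RULES: list[Rule] = [
    ("order_id is present", lambda r: r.get("order_id") is not None),
    ("amount is a non-negative number",
     lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0),
    ("currency is a 3-letter code",
     lambda r: isinstance(r.get("currency"), str) and len(r["currency"]) == 3),
]


def run_quality_checks(rows: list[Row], rules: list[Rule]) -> list[tuple[int, str]]:
    """Return (row_index, rule_description) for every rule that a row fails."""
    failures: list[tuple[int, str]] = []
    for index, row in enumerate(rows):
        for description, passes in rules:
            if not passes(row):
                failures.append((index, description))
    return failures


if __name__ == "__main__":
    batch = [
        {"order_id": 1, "amount": 19.99, "currency": "USD"},
        {"order_id": None, "amount": -5, "currency": "US"},
    ]
    for index, description in run_quality_checks(batch, RULES):
        print(f"row {index} failed: {description}")
```

Because the rules live in one list, the same checks can run automatically on every new batch instead of being repeated by hand.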

Data Quality

DataOps takes a technology-agnostic approach, which means the company can choose the best tool for each job. Processing each kind of data with a suitable tool helps maintain data quality.

Collaboration and Continuous Integration

DataOps features deterministic, probabilistic, and humanistic data integration. In deterministic integration, records are matched using predetermined rules, such as an exact match on a customer ID; in probabilistic integration, records are matched based on how likely they are to refer to the same entity; and in humanistic integration, human experts match the records. Combining these methods fosters collaboration between different kinds of experts, including data analysts and engineers.
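The three integration styles can be sketched in Python as follows. This is a simplified illustration, assuming two hypothetical customer lists (`crm` and `billing`): exact email matches are deterministic, a `difflib` string-similarity score stands in for a true probabilistic model (such as Fellegi-Sunter record linkage), and uncertain matches are queued for human review. The thresholds `AUTO_MATCH` and `REVIEW` are arbitrary assumptions.

```python
from difflib import SequenceMatcher

# Hypothetical customer records from two source systems.
crm = [
    {"email": "ana@example.com", "name": "Ana Lopez"},
    {"email": "raj@example.com", "name": "Raj Patel"},
]
billing = [
    {"email": "ana@example.com", "name": "Ana López"},
    {"email": "r.patel@example.com", "name": "Raj Patel"},
    {"email": "rpatil@example.com", "name": "Raj Patil"},
    {"email": "kim@example.com", "name": "Kim Lee"},
]

AUTO_MATCH = 0.9  # assumed threshold: accept the match automatically
REVIEW = 0.6      # assumed threshold: send the pair to a human reviewer


def similarity(a: str, b: str) -> float:
    """String similarity in [0, 1]; a stand-in for a real probabilistic model."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match(left: list[dict], right: list[dict]):
    matched, review_queue, unmatched = [], [], []
    for record in right:
        # 1. Deterministic: exact match on a predetermined key (email).
        exact = next((cand for cand in left if cand["email"] == record["email"]), None)
        if exact is not None:
            matched.append((exact, record, "deterministic"))
            continue
        # 2. Probabilistic: pick the most similar name and score it.
        best = max(left, key=lambda cand: similarity(cand["name"], record["name"]))
        score = similarity(best["name"], record["name"])
        if score >= AUTO_MATCH:
            matched.append((best, record, "probabilistic"))
        elif score >= REVIEW:
            # 3. Humanistic: uncertain pairs are left for a human expert to decide.
            review_queue.append((best, record, score))
        else:
            unmatched.append(record)
    return matched, review_queue, unmatched


if __name__ == "__main__":
    matched, review_queue, unmatched = match(crm, billing)
    for left_rec, right_rec, method in matched:
        print(f"{right_rec['name']} -> {left_rec['name']} ({method})")
    for left_rec, right_rec, score in review_queue:
        print(f"needs review: {right_rec['name']} ~ {left_rec['name']} (score {score:.2f})")
    print("unmatched:", [r["name"] for r in unmatched])
```

With this sample data, the first billing record matches deterministically by email, "Raj Patel" matches on name, "Raj Patil" lands in the review queue, and "Kim Lee" stays unmatched.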

Table(s) In / Table(s) Out Protocol

This protocol simplifies data integration by giving every processing step a well-defined interface: each step takes one or more tables as input and produces one or more tables as output. This allows a clear separation of responsibilities between different areas of data management.
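To make the idea concrete, here is a minimal Python sketch, assuming a "table" is simply a list of row dictionaries. Each hypothetical step (`clean_orders`, `join_customers`, `revenue_by_customer`) accepts tables and returns a new table, so the steps can be chained, tested, and owned separately.

```python
# Assumption: a "table" is a list of row dicts; real pipelines would use SQL tables or DataFrames.
Table = list[dict[str, object]]


def clean_orders(orders: Table) -> Table:
    """Table in, table out: drop rows that have no order_id."""
    return [row for row in orders if row.get("order_id") is not None]


def join_customers(orders: Table, customers: Table) -> Table:
    """Tables in, table out: attach each order's customer name."""
    names = {c["customer_id"]: c["name"] for c in customers}
    return [{**o, "customer_name": names.get(o["customer_id"])} for o in orders]


def revenue_by_customer(orders: Table) -> Table:
    """Table in, table out: total revenue per customer."""
    totals: dict[object, float] = {}
    for o in orders:
        name = o["customer_name"]
        totals[name] = totals.get(name, 0.0) + o["amount"]
    return [{"customer_name": name, "revenue": total} for name, total in totals.items()]


if __name__ == "__main__":
    raw_orders = [
        {"order_id": 1, "customer_id": "c1", "amount": 10.0},
        {"order_id": None, "customer_id": "c1", "amount": 99.0},
        {"order_id": 2, "customer_id": "c2", "amount": 5.0},
        {"order_id": 3, "customer_id": "c1", "amount": 2.5},
    ]
    customers = [
        {"customer_id": "c1", "name": "Ana"},
        {"customer_id": "c2", "name": "Raj"},
    ]
    # Each step's output table becomes the next step's input table.
    report = revenue_by_customer(join_customers(clean_orders(raw_orders), customers))
    print(report)
```

Since every step shares the same table-shaped interface, one team can change how orders are cleaned without touching the aggregation step, as long as the output table keeps its columns.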

Open

DataOps favors open-source tools. Open source is easier for enterprises to adopt and deploy, and it drives better collaboration, shared best practices, and regular communication. It also gives teams flexibility in selecting the best tools.

What Is DevOps?

DevOps brings development, engineering, and operations together so that software development and release become more efficient, productive, and economical. DevOps places special emphasis on delivery capability and bridges the gap between the development and operations teams to encourage closer collaboration.

DevOps Principles

Like DataOps, DevOps has a set of core principles, many of which it shares with agile software development. Some of these principles are described below.

Automation

DevOps automates as many processes as possible to make them quicker, easier, and more efficient, ideally covering the end-to-end process of software development and operations. This helps software reach the market faster, while multiple layers of automated functional tests help make sure each release meets customers' expectations.

Collaboration

For the most successful product launches, the development and operations teams need to work together throughout the process, which fosters close collaboration between these two branches of the company.

Continuous Integration

To reduce the risk of integration conflicts and disruptive failures, DevOps follows continuous integration (CI). Developers merge their code into a shared central repository several times a day instead of integrating it all at once, and each merge is automatically built and tested.

Continuous Improvement

Continuous improvement is an agile practice of updating code in small, frequent increments, which reduces the chances of errors or bugs building up in the software.

Continuous Delivery

Continuous delivery keeps code in a releasable state so that developers and engineers can ship updates quickly and frequently. The final release to production is triggered manually, whereas in continuous deployment that step is automated as well. Frequent, reliable updates help improve customer satisfaction.

Objectives and Focus Areas

DataOps

Objectives in Data Management

At its core, DataOps is a collaborative data management framework that promotes communication, integration, and automation. It also helps break down data silos.

The objective of DataOps is to ensure efficient data processing and analysis through technology tools and a continuous cycle of data monitoring, improvement, and feedback. This continuous monitoring, combined with machine learning and statistical methods, also helps ensure data quality and reliability.

Focus Areas

DataOps enables collaboration between different teams in a company. It integrates data using several methods (deterministic, probabilistic, and humanistic data integration) and automates data pipelines and workflows.

DataOps also monitors data using statistical methods, common metrics, feedback loops, and best practices. In addition, it provides quality assurance: by integrating the right tools and technologies, DataOps helps ensure high-quality data.
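A common statistical monitoring check is to compare a new measurement against its history. The Python sketch below flags a day's row count as anomalous when it lies more than three standard deviations from the historical mean (a z-score check). The metric, the sample numbers, and the threshold of 3 are illustrative assumptions rather than a fixed standard.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Return True if `latest` is more than `threshold` standard deviations from the historical mean."""
    if len(history) < 2:
        raise ValueError("need at least two historical values to estimate spread")
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # A perfectly constant history: any change at all is unusual.
        return latest != mu
    z_score = abs(latest - mu) / sigma
    return z_score > threshold


if __name__ == "__main__":
    # Hypothetical daily row counts for one table in a pipeline.
    daily_row_counts = [1000, 1020, 980, 1010, 990]
    for todays_count in (1005, 400):
        status = "ALERT" if is_anomalous(daily_row_counts, todays_count) else "ok"
        print(f"{todays_count} rows: {status}")
```

An alert like this can feed the feedback loop described above, prompting someone to investigate a broken upstream source before bad data reaches analysts.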

DevOps

Objectives in Software Development

DevOps aims to create a sustainable infrastructure for software development and delivery. This speeds up software delivery and improves development through closer cooperation with operations.

DevOps enables a strong delivery pipeline for building, testing, and releasing software to customers much faster, through automation, continuous integration, continuous improvement, continuous deployment, and continuous delivery. It also monitors the software continuously through a feedback loop.

Because the software is continuously built and improved, the development and operations teams collaborate more closely.

Focus Areas

DevOps emphasises CI/CD (continuous integration and continuous delivery/continuous deployment). CI merges each change into the main branch of the codebase as often as possible, where it is automatically built and tested. Continuous delivery ensures that every change passing through the pipeline is ready to be released, while continuous deployment goes one step further and releases each such change to customers automatically, without human intervention.
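The difference between continuous delivery and continuous deployment can be sketched as a toy Python model. The `Change` and `Pipeline` classes are hypothetical, and test results are simulated with a boolean; in practice a CI/CD tool runs real builds and tests. Every change is tested on integration, and passing changes are either released automatically (continuous deployment) or held for manual approval (continuous delivery).

```python
from dataclasses import dataclass, field


@dataclass
class Change:
    """A hypothetical code change; `tests_pass` simulates the result of the CI build and test run."""
    change_id: str
    tests_pass: bool


@dataclass
class Pipeline:
    # True models continuous deployment (release automatically);
    # False models continuous delivery (release waits for manual approval).
    auto_release: bool
    released: list[str] = field(default_factory=list)
    awaiting_approval: list[str] = field(default_factory=list)
    rejected: list[str] = field(default_factory=list)

    def integrate(self, change: Change) -> None:
        # CI: every change is built and tested as soon as it is merged.
        if not change.tests_pass:
            self.rejected.append(change.change_id)
            return
        # CD: a change that passes is releasable.
        if self.auto_release:
            self.released.append(change.change_id)
        else:
            self.awaiting_approval.append(change.change_id)

    def approve(self, change_id: str) -> None:
        # The manual release step used in continuous delivery.
        self.awaiting_approval.remove(change_id)
        self.released.append(change_id)


if __name__ == "__main__":
    changes = [Change("a1", True), Change("b2", False), Change("c3", True)]
    delivery = Pipeline(auto_release=False)
    deployment = Pipeline(auto_release=True)
    for change in changes:
        delivery.integrate(change)
        deployment.integrate(change)
    delivery.approve("a1")  # a person decides to release a1 now
    print("continuous delivery:", delivery.released, "waiting:", delivery.awaiting_approval)
    print("continuous deployment:", deployment.released)
```

Running the same three changes through both modes shows the distinction: with `auto_release=True` the passing changes go straight to `released`, while with `auto_release=False` they wait in `awaiting_approval` until someone calls `approve`. The failing change is rejected in both modes.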

The second notable focus of DevOps is automation: the whole process from development through delivery is automated to make deployment fast, effective, and cheaper. For large-scale configuration, teams use infrastructure as code (IaC), writing infrastructure definitions and configuration in tools such as AWS CloudFormation, Chef, or Puppet. This helps automate the provisioning and deployment of infrastructure such as servers.
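As a small illustration of infrastructure as code, the Python sketch below builds a minimal AWS CloudFormation template describing a single EC2 instance and prints it as JSON. The resource name `WebServer`, the default instance type, and the `ImageId` parameter are assumptions for illustration; the printed template could then be saved to a file and deployed with a tool such as the AWS CLI's `aws cloudformation deploy` command.

```python
import json


def web_server_template(instance_type: str = "t3.micro") -> dict:
    """Build a minimal CloudFormation template (as a dict) for one EC2 instance."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Single web server (illustrative example)",
        "Parameters": {
            # The machine image is supplied at deploy time rather than hard-coded.
            "ImageId": {
                "Type": "AWS::EC2::Image::Id",
                "Description": "AMI to launch",
            }
        },
        "Resources": {
            "WebServer": {
                "Type": "AWS::EC2::Instance",
                "Properties": {
                    "InstanceType": instance_type,
                    "ImageId": {"Ref": "ImageId"},
                },
            }
        },
    }


if __name__ == "__main__":
    # Emit the template as JSON so it can be saved and version-controlled.
    print(json.dumps(web_server_template(), indent=2))
```

Because the infrastructure is described as data, it can be version-controlled, reviewed, and recreated in the same way as application code.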

Collaboration and Team Dynamics

DataOps

There is an emphasis on cross-functional collaboration in DataOps. To maximize efficiency across the stages of the data pipeline (data ingestion, preparation, analysis, visualization, and delivery), many teams collaborate constantly.

Data engineers, data scientists, and operations teams work together to integrate different tools and technologies, using common metrics, feedback loops, and best practices to get the best results.

This communication is essential for breaking down silos in data-related processes and for facilitating experimentation.

DevOps

There is also an emphasis on cross-functional collaboration in DevOps. There is collaboration between development, operations, and quality assurance teams, which helps to leverage skill sets and experiences. Through shared goals and objectives, cross-training, and skill enhancement, the software development pipeline is made smooth and efficient.

CI/CD pipelines, joint collaborative efforts, and shared accountability through continuous feedback loops are essential for breaking down silos in software development and delivery.

Tools and Technologies

DataOps

There are many great tools for data integration and automation. Astera is an AI-powered, automated data integration platform that helps with everything from data extraction to building data warehouses. Jitterbit lets companies combine data from multiple sources and leverage AI features. Celigo is an integration platform as a service (iPaaS) that helps businesses automate tasks. Microsoft Power Automate (formerly Microsoft Flow) is a cloud-based workflow automation tool.

Apache Airflow is a Python-based orchestration platform in which workflows can interact with REST APIs, cloud platforms, messaging systems, and more. Kestra is a language-agnostic orchestration tool. SolarWinds Database Performance Analyzer and Database Performance Monitor are two of the best-known database monitoring tools.

DevOps

There are plenty of CI/CD tools, both open-source and cloud-hosted, that run on various operating systems, are easy to set up and use, and offer free versions. Jenkins is an open-source, self-contained, Java-based automation server that helps with continuous integration and deployment. CircleCI is a CI/CD platform that integrates with Bitbucket, GitHub, and GitHub Enterprise and enables greater automation across the software development pipeline.

Continuous Improvement

DataOps

Iterative data management is a continuous cycle of data planning, analysis, implementation, and evaluation. Because each iteration ends with incremental improvements, research and development become more efficient.

Data processes improve continuously through establishing a governance framework, populating the data catalog, driving community collaboration, and monitoring and measuring the data. This monitoring feeds the feedback loops and data pipeline optimization, so any problems with the data can be fixed after each iteration of data processing.

DevOps

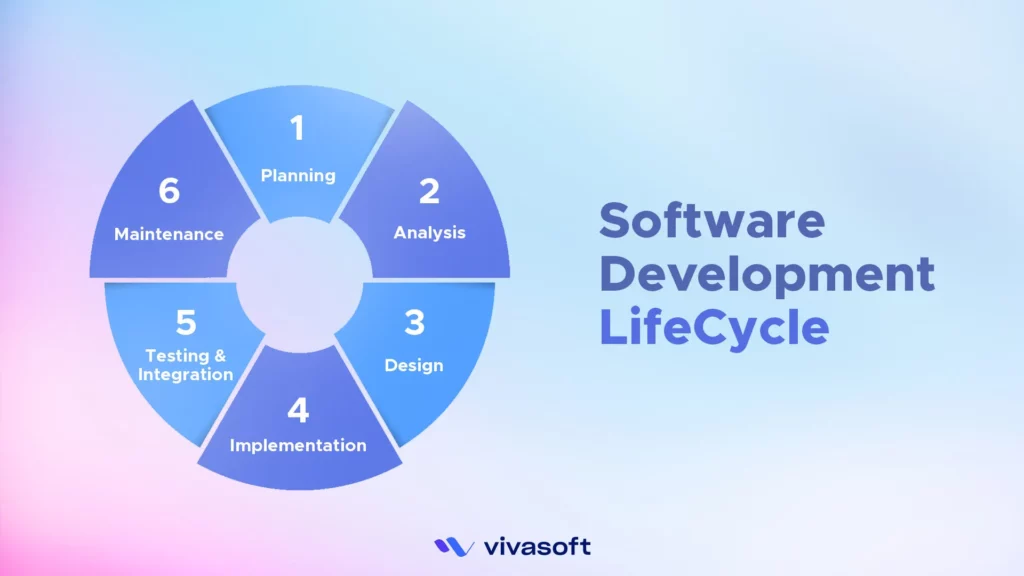

Iterative software development is a continuous cycle of software building, evaluation, analysis, and deployment. The software development life cycle is broken down and streamlined.

This is particularly important for continuous improvement in software development and delivery: the software is continuously planned, built, and tested, and bugs are fixed over time. The software then goes through automated testing, is released and deployed, and a feedback loop stays in place to catch and fix any errors or bugs that occur.

Challenges and Solutions

Common Challenges in DataOps

Data Quality and Consistency: Managing large volumes of data while keeping it accurate and consistent is a massive undertaking and one of the main complexities of DataOps. Dedicated data quality and consistency tools can help solve these problems.

Managing Data Complexity: Data curation and management are difficult, especially because DataOps deals with complex, heterogeneous data sources. However, data curation tools and other DataOps management tools are available to help solve these issues.

Common Challenges in DevOps

Integration and Deployment Bottlenecks: Integrating tools from different domains can be difficult, and adopting new DevOps toolsets carries risk. Adopting a DevOps governance model and changing the organizational structure can also be hard. These issues can be overcome by gradually introducing DevOps culture into the workplace and by using better CI/CD tools that automate tasks.

Balancing Speed and Stability: Shifting to DevOps is a cultural challenge that can temporarily reduce speed and stability. Teams also have to make sure the product maintains its quality, so balancing speed and stability is an important consideration. These problems can be addressed by gradually shifting the organization's culture and training the workforce to adopt agile software development methodologies.

Choosing the Right Approach

The methodology you choose depends on the operations you want to streamline. Prioritize DataOps if your focus is data analytics and management: it covers data quality management, data integration, and data governance, and it handles data from complex, heterogeneous sources. If you need data governance, emphasize data quality and security, and care more about time-to-insight than time-to-market, DataOps is the better fit. If your priority is releasing software quickly and reliably, focus on DevOps.

However, for the most comprehensive solution, you should integrate DataOps and DevOps. Both treat automation as a core principle, rely on collaboration, and use agile methodology, continuous development, and quality assurance.

DataOps is most useful during the extraction, curation, and analysis of data, particularly when working with large datasets, while DevOps is most useful when releasing software to the market. The two methodologies work best in tandem, so the most comprehensive advice is to use both.

Final Thoughts

Although DataOps and DevOps share many principles and methodologies, some fundamental differences exist between them. DataOps focuses on data analytics rather than software development, the tools and technologies involved differ, and so does the complexity of the data. While DataOps is concerned with time-to-insight, DevOps is concerned with time-to-market.

This is why there is no clear winner. Each strategy has its own strengths and weaknesses, which is why the best answer is to use both.

FAQs

Which is better, DataOps or DevOps?

DataOps is concerned with using data effectively, while DevOps is about continuously delivering software. The right choice depends on the organization's priorities. However, combining the two, an approach sometimes called “DataDevOps”, can work well.

What are the commonalities between DataOps and DevOps?

DevOps and DataOps show the significance of teamwork and communication. Automation is a huge part of both approaches; it allows teams to be more efficient and consistent.

What challenges are associated with implementing DataOps?

Challenges in implementing DataOps include data governance, compliance, and scaling data operations.

What challenges are associated with implementing DevOps?

Challenges in adopting DevOps can include organisational resistance to moving away from traditional development methodologies and balancing speed against quality when delivering software.

Can you provide examples of successful DataOps implementations?

Companies such as Netflix and Facebook have implemented DataOps to improve their data management, analytics and collaboration.